Running HDFS code in IDEA fails: do I also need to install Hadoop on Windows?

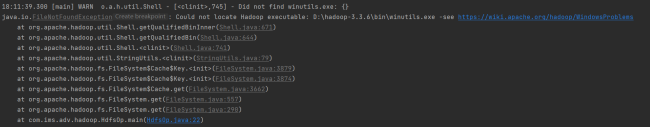

```
11:30:09.696 [main] WARN o.a.h.util.Shell - [<clinit>,745] - Did not find winutils.exe: {}
java.io.FileNotFoundException: java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset. -see https://wiki.apache.org/hadoop/WindowsProblems
    at org.apache.hadoop.util.Shell.fileNotFoundException(Shell.java:600)
    at org.apache.hadoop.util.Shell.getHadoopHomeDir(Shell.java:621)
    at org.apache.hadoop.util.Shell.getQualifiedBin(Shell.java:644)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:741)
    at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:79)
    at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:3879)
    at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:3874)
    at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:3662)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:557)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:290)
    at com.ims.adv.hadoop.HdfsOp.main(HdfsOp.java:21)
Caused by: java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset.
    at org.apache.hadoop.util.Shell.checkHadoopHomeInner(Shell.java:520)
    at org.apache.hadoop.util.Shell.checkHadoopHome(Shell.java:491)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:568)
    ... 7 common frames omitted
11:30:09.836 [main] WARN o.a.h.u.NativeCodeLoader - [<clinit>,60] - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
```

```
org.apache.hadoop.security.AccessControlException: Permission denied: user=26016, access=WRITE, inode="/":root:supergroup:drwxr-xr-x
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:506)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:346)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:242)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1943)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1927)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkAncestorAccess(FSDirectory.java:1886)
    at org.apache.hadoop.hdfs.server.namenode.FSDirWriteFileOp.resolvePathForStartFile(FSDirWriteFileOp.java:323)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInt(FSNamesystem.java:2685)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFile(FSNamesystem.java:2625)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.create(NameNodeRpcServer.java:807)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.create(ClientNamenodeProtocolServerSideTranslatorPB.java:496)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:621)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:589)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:573)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1227)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1094)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1017)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:3048)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
    at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)
    at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
    at java.lang.reflect.Constructor.newInstance(Constructor.java:423)
    at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:121)
    at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:88)
    at org.apache.hadoop.hdfs.DFSOutputStream.newStreamForCreate(DFSOutputStream.java:286)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1271)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1250)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1232)
    at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:1170)
    at org.apache.hadoop.hdfs.DistributedFileSystem$8.doCall(DistributedFileSystem.java:569)
    at org.apache.hadoop.hdfs.DistributedFileSystem$8.doCall(DistributedFileSystem.java:566)
    at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)
    at org.apache.hadoop.hdfs.DistributedFileSystem.create(DistributedFileSystem.java:580)
    at org.apache.hadoop.hdfs.DistributedFileSystem.create(DistributedFileSystem.java:507)
    at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:1233)
    at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:1210)
    at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:1091)
    at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:1078)
    at com.ims.adv.hadoop.HdfsOp.main(HdfsOp.java:29)
Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=26016, access=WRITE, inode="/":root:supergroup:drwxr-xr-x
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:506)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:346)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:242)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1943)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1927)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkAncestorAccess(FSDirectory.java:1886)
    at org.apache.hadoop.hdfs.server.namenode.FSDirWriteFileOp.resolvePathForStartFile(FSDirWriteFileOp.java:323)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInt(FSNamesystem.java:2685)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFile(FSNamesystem.java:2625)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.create(NameNodeRpcServer.java:807)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.create(ClientNamenodeProtocolServerSideTranslatorPB.java:496)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:621)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:589)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine2.java:573)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1227)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1094)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1017)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1899)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:3048)
    at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1567)
    at org.apache.hadoop.ipc.Client.call(Client.java:1513)
    at org.apache.hadoop.ipc.Client.call(Client.java:1410)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:258)
    at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:139)
    at com.sun.proxy.$Proxy9.create(Unknown Source)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.create(ClientNamenodeProtocolTranslatorPB.java:383)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:433)
    at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:166)
    at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:158)
    at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:96)
    at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:362)
    at com.sun.proxy.$Proxy10.create(Unknown Source)
    at org.apache.hadoop.hdfs.DFSOutputStream.newStreamForCreate(DFSOutputStream.java:280)
    ... 14 more

Process finished with exit code 0
```

Could the instructor explain what is causing these errors?

Answer (accepted):

This error log shows two separate problems.

1:HADOOP_HOME and hadoop.home.dir are unset.

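This one generally does not affect how the program runs: it is only logged at WARN level and execution continues. If you want to fix it anyway, do the following.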

First, extract the hadoop-3.2.0 package on the D: drive in Windows (the same Hadoop package you installed on the Linux VM). After extraction it looks like this:

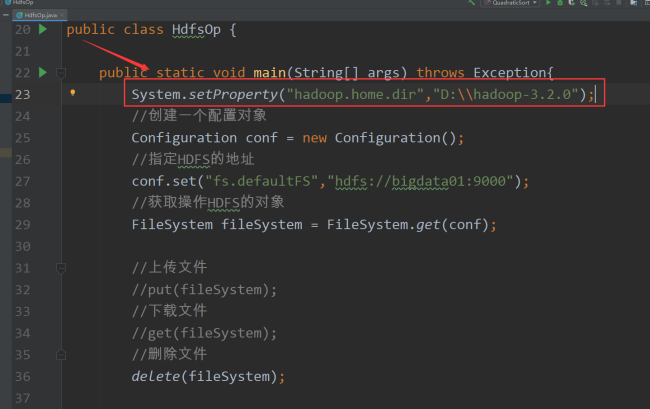

Then add one line to the Java code that operates on HDFS:

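`System.setProperty("hadoop.home.dir", "D:\\hadoop-3.2.0");` Put this line at the start of `main`, before the first `FileSystem.get(...)` call. As the stack trace shows, that call is what first loads Hadoop's `Shell` class, and `Shell` reads the setting while it initializes.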
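To check which directory Hadoop will actually pick up, here is a small, stdlib-only diagnostic sketch. It mirrors, in simplified form, the lookup that `Shell.checkHadoopHome` performs (as far as I know, the `hadoop.home.dir` JVM property first, then the `HADOOP_HOME` environment variable, which matches the two names in the error message), and it also checks for `bin\winutils.exe`, which `Shell` looks for on Windows. The class and method names (`HadoopHomeCheck`, `findHadoopHome`, `hasWinutils`) are hypothetical, not Hadoop APIs. Note that the Linux Hadoop package may not contain `winutils.exe`, in which case a warning about that file can remain after the fix; like the original warning, it generally does not block plain HDFS client calls.

```java
import java.io.File;

/**
 * Hypothetical diagnostic, not part of Hadoop: a simplified mirror of how
 * org.apache.hadoop.util.Shell locates the Hadoop home directory.
 */
public class HadoopHomeCheck {

    /** Returns the configured Hadoop home, or null if neither source is set. */
    static File findHadoopHome() {
        // The JVM property is consulted first...
        String home = System.getProperty("hadoop.home.dir");
        if (home == null) {
            // ...and the environment variable is only the fallback.
            home = System.getenv("HADOOP_HOME");
        }
        return home == null ? null : new File(home);
    }

    /** True if <home>/bin/winutils.exe exists as a regular file. */
    static boolean hasWinutils(File home) {
        return home != null && new File(new File(home, "bin"), "winutils.exe").isFile();
    }

    public static void main(String[] args) {
        // In real HDFS client code this must run before the first FileSystem.get(...),
        // because Shell resolves the home directory once, in its static initializer.
        System.setProperty("hadoop.home.dir", "D:\\hadoop-3.2.0");

        File home = findHadoopHome();
        System.out.println("Hadoop home: " + home);
        System.out.println("winutils.exe present: " + hasWinutils(home));
    }
}
```

Setting the property in code rather than relying on `HADOOP_HOME` keeps the fix local to the project, and because the property takes precedence, it also overrides a stale environment variable.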

2: Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=26016, access=WRITE, inode="/":root:supergroup:drwxr-xr-x

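This is a cluster permission problem. The client connects as the Windows user `26016`, but `/` is owned by `root:supergroup` with mode `drwxr-xr-x`, so only `root` may write there. The course covers the fix: you need to change the cluster's configuration, and the cluster must be restarted for the change to take effect.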
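To make the denial concrete, here is a simplified, hypothetical model of the owner/group/other check that HDFS's `FSPermissionChecker` (visible in the trace) applies. It ignores ACLs, the sticky bit, and the HDFS superuser, and it assumes the Windows user `26016` is not a member of `supergroup`, since the log does not say. It is not Hadoop's code and does not replace the configuration change from the course; it only shows why `WRITE` on `/` fails.

```java
import java.util.Collections;
import java.util.Set;

/**
 * Hypothetical, simplified owner/group/other permission check in the style of
 * HDFS. Ignores ACLs, the sticky bit and the superuser.
 */
public class HdfsPermissionSketch {

    /** Declaration order matches the r, w, x positions inside a triplet. */
    enum Access { READ, WRITE, EXECUTE }

    /**
     * @param mode symbolic mode as printed in the error, e.g. "drwxr-xr-x"
     * @return true if the user may perform the access on the inode
     */
    static boolean isAllowed(String user, Set<String> userGroups,
                             String owner, String group, String mode, Access access) {
        String bits = mode.substring(1); // drop the file-type char ('d' = directory)
        int offset;
        if (user.equals(owner)) {
            offset = 0;                  // owner triplet
        } else if (userGroups.contains(group)) {
            offset = 3;                  // group triplet
        } else {
            offset = 6;                  // "other" triplet
        }
        char expected = "rwx".charAt(access.ordinal());
        return bits.charAt(offset + access.ordinal()) == expected;
    }

    public static void main(String[] args) {
        // Values from the error: user=26016, inode="/":root:supergroup:drwxr-xr-x.
        // Assumption: 26016 belongs to no HDFS group named supergroup.
        Set<String> noGroups = Collections.emptySet();
        // prints false
        System.out.println(isAllowed("26016", noGroups, "root", "supergroup", "drwxr-xr-x", Access.WRITE));
        // prints true
        System.out.println(isAllowed("root", noGroups, "root", "supergroup", "drwxr-xr-x", Access.WRITE));
    }
}
```

Because `26016` is neither the owner nor in the group, only the last triplet (`r-x`) applies, and it contains no `w`, which is exactly the `access=WRITE` denial in the log.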
